```python
parser.add_argument('--inputFilePath', type=str, default='gs://test-bucket-13/Input/Adress.csv')
```
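The `add_argument` call above assumes that a `parser` object has already been created. The sketch below shows one way to set that up with `argparse`, using the conventional double-dash flag form. It is only an illustration: the `--outputFilePath` argument, its default path, and the `parse_args` helper are hypothetical additions that are not part of the original code. `parse_known_args` returns the arguments it recognises, plus a list of every argument it does not. This means Dataflow runner flags such as `--region` or `--job_name` are left untouched and can be passed on to the pipeline.

```python
import argparse


def parse_args(argv=None):
    """Split the job's own arguments from runner arguments (hypothetical helper)."""
    parser = argparse.ArgumentParser(description="CSV to JSON conversion job")
    # Input CSV location in Cloud Storage (default taken from the article).
    parser.add_argument(
        "--inputFilePath",
        type=str,
        default="gs://test-bucket-13/Input/Adress.csv",
    )
    # Hypothetical output location; adjust to your own bucket and folder.
    parser.add_argument(
        "--outputFilePath",
        type=str,
        default="gs://test-bucket-13/Output/Adress.json",
    )
    # Unrecognised flags (e.g. --region, --job_name) are returned unchanged
    # in the second list instead of raising an error.
    known_args, pipeline_args = parser.parse_known_args(argv)
    return known_args, pipeline_args


if __name__ == "__main__":
    args, runner_args = parse_args()
    print(args.inputFilePath, args.outputFilePath, runner_args)
```

Here is an example. Running `python main.py --region us-central1 --inputFilePath gs://test-bucket-13/Input/Adress.csv` (where `main.py` is a placeholder script name) would leave `['--region', 'us-central1']` in the second return value.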

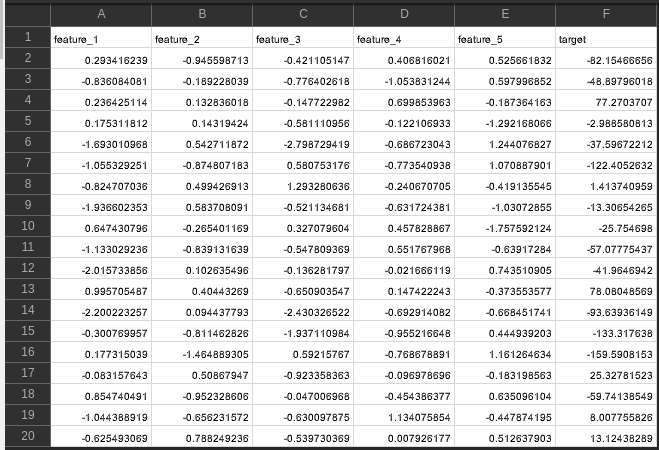

If you are running the application locally from an IDE, install the required packages in the same way, for example from the console/CLI in VS Code.

Set up a service account in your Google Cloud project and grant it the permissions/roles it needs.

Let's upload the sample CSV file to a Google Cloud Storage bucket. I have a sample file that we will upload to the GCP environment. I have also created an "Input" folder within the bucket, and the file will be placed there.

Dataflow CSV to JSON conversion in Python using templates

You can configure the Dataflow job either in the GCP console or through the Dataflow UI.

All files in the bucket are addressed with a common folder-style path, for example `gs://test-bucket-13/Input/Adress.csv`. Click on the file and copy its location.

You can get started with Google-provided templates to get a basic understanding of the workflow, or you can use your own custom templates. In either case, you need to configure the job properties, for example the job name, region, input file location, output file location and template location.

The output will be generated in the output folder.

Setting up security credentials – GOOGLE_APPLICATION_CREDENTIALS

If you have created a dedicated Dataflow service account, you can configure the pipeline to use it from Python code (after `import os`):

`os.environ['GOOGLE_APPLICATION_CREDENTIALS'] = 'secured.json'`

You can download your `secured.json` key file from the Service account page. The environment variable can also be set through automated techniques such as a deployment pipeline.

The conversion logic uses the pandas library to convert CSV files to JSON files.
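The pandas conversion step could look like the minimal sketch below. It is not the author's original code. The helper name `csv_to_json`, the assumption that the CSV has a header row, and the default `orient="records"` output shape are all illustrative choices. pandas can also read and write `gs://` paths directly when the optional `gcsfs` package is installed and credentials are configured. The demo uses an in-memory sample so that it runs without cloud access.

```python
import io

import pandas as pd


def csv_to_json(csv_source, json_target=None, orient="records"):
    """Read a CSV (path or file-like object) and serialise it as JSON.

    csv_to_json is a hypothetical helper name used for illustration.
    If json_target is None the JSON text is returned; otherwise it is
    written to json_target and None is returned.
    """
    df = pd.read_csv(csv_source)  # assumes the first row holds column names
    # orient="records" produces a list of {column: value} objects, one per row.
    return df.to_json(json_target, orient=orient)


if __name__ == "__main__":
    # In-memory sample so the sketch runs without Cloud Storage access.
    sample = io.StringIO("name,city\nAlice,London\nBob,Paris\n")
    print(csv_to_json(sample))
    # With gcsfs installed and credentials set, GCS paths should also work
    # (the output path here is hypothetical):
    # csv_to_json("gs://test-bucket-13/Input/Adress.csv",
    #             "gs://test-bucket-13/Output/Adress.json")
```

Another `orient` value, such as `"columns"`, changes the JSON layout without changing how the CSV is read.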
